BlackBoxPhD 3-Point Checklist: How to Leverage Image Recognition Technology

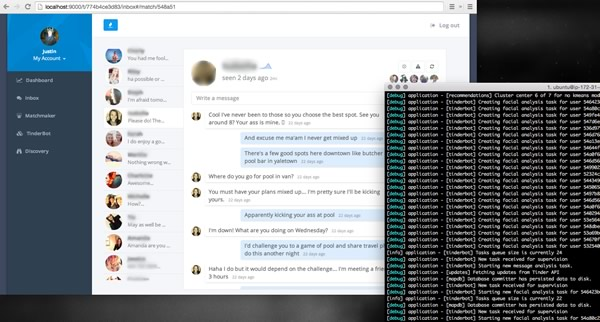

- Image-recognition software can be used to make online dating more efficient. Justin used the open source Eigenfaces technique to improve his experience on Tinder and save time.

- This technology can also be used for social good. These software innovations can be scaled to detect things like child pornography and sexual abuse.

- Eigenfaces is a “quick and dirty” image recognition solution. To recognize the faces across different pictures, it requires that the person is facing the same direction in each picture.

Justin – thanks so much for speaking with us! The recent coverage of your Tinderbox left us at the UCLA Center for Digital Behavior very intrigued. Could you share a bit about yourself and how you got involved with Tinderbox?

Sure. My name is Justin Long and I’m the Chief of Research and Special Projects at 3 Tier Logic.

Tinderbox began as an everyday problem. Tinder is a very widely used dating application that asks users to swipe right (yes) or left (no) on a potential match’s picture, depending on whether they like them.

One of my gripes with the app was that it was very time-consuming. My friends were getting sucked into it, I was getting sucked into it, and I just wanted to automate the process. It started out as a gripe, but as I started building it and diving into the technology, it became more about seeing whether I could build it, and it turned into a really fun project.

Could you explain how the image-recognition algorithm, Eigenfaces, works? Is it open source?

Eigenfaces has been around for a long time (since 1987) and it is open source. The way it works is that you start with a training set of faces that have already been identified – you already know who they belong to. You turn each image into a matrix, so that it is just a grid of numbers that represent pixel intensity. Next, you take the average across every image in the training set and calculate the mathematical difference between each individual image and that average. Once you have the average, you can take a new image, compute its difference from the average in the same way, and find which training image has the closest difference. So if you are identifying someone for facial recognition purposes, the algorithm compares how the new image differs from the average image – the mean – with how each known face differs from it, and is able to recognize person A or person B and differentiate them from person C.
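The steps Justin describes can be sketched in a few lines of Python with NumPy. This is a minimal illustration of the Eigenfaces idea as it is commonly described (mean face, differences from the mean, projection onto the main directions of variation, nearest match), not Tinderbox's actual code. The function names, the tiny 4x4 synthetic "faces", and the choice of two components are all assumptions made for the example.

```python
import numpy as np

def train_eigenfaces(images, labels, n_components=2):
    """Fit a tiny Eigenfaces model on grayscale images of identical shape."""
    # Flatten each image grid into one row of pixel intensities.
    X = np.asarray(images, dtype=float).reshape(len(images), -1)
    mean_face = X.mean(axis=0)
    centered = X - mean_face  # difference of each face from the mean face
    # Rows of vt are the principal directions of the centered set (the "eigenfaces").
    _, _, vt = np.linalg.svd(centered, full_matrices=False)
    eigenfaces = vt[:n_components]
    weights = centered @ eigenfaces.T  # each training face as a few coordinates
    return mean_face, eigenfaces, weights, list(labels)

def predict(image, model):
    """Return the label of the training face closest to `image` in eigenface space."""
    mean_face, eigenfaces, weights, labels = model
    w = (np.asarray(image, dtype=float).ravel() - mean_face) @ eigenfaces.T
    distances = np.linalg.norm(weights - w, axis=1)
    return labels[int(np.argmin(distances))]

# Hypothetical toy data: two 4x4 "faces" with different bright regions.
rng = np.random.default_rng(0)
face_a = np.zeros((4, 4))
face_a[:, :2] = 200.0  # person A: left half bright
face_b = np.zeros((4, 4))
face_b[:2, :] = 200.0  # person B: top half bright

def noisy(base):
    """Simulate a slightly different photo of the same face."""
    return base + rng.normal(0.0, 5.0, size=base.shape)

train_images = [noisy(face_a) for _ in range(3)] + [noisy(face_b) for _ in range(3)]
train_labels = ["A"] * 3 + ["B"] * 3
model = train_eigenfaces(train_images, train_labels)

print(predict(noisy(face_a), model))
print(predict(noisy(face_b), model))
```

Real photos would need to be cropped, aligned and converted to grayscale first, which is where most of the practical effort tends to go.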

Interesting. Now, with the image-recognition capabilities of Eigenfaces and the smart bot built into Tinderbox, where else do you see this being applied? How do you hope others will use what you’ve built for crime, health and medicine?

There are two immediate applications. One is related to prediction technology for health and forensics. If you’re an investigator, say on a child pornography case, it may be useful to use the same facial recognition to identify a perpetrator. That’s more of a policing use. From a health perspective, it’s not every day that somebody gets their photo taken, but people do put pictures of themselves on social media. I’m personally very interested in averaging faces and comparing the average for someone with a history of abuse to the average for someone without one. I would be very interested to see whether there’s a pattern where you can say “there’s definitely a facial structure change over time”. A lot of our emotions are communicated via facial expressions, and it is known that your facial structure changes over time.

Another is business-related. Anyone who runs a dating site is interested in this, because they want to optimize their matching algorithms.

What are potential barriers in using something like Eigenfaces for recognizing abuse and what are ways to overcome these hurdles?

You’ve got a couple of problems with the algorithm itself. The first one is that Eigenfaces only works with black and white (grayscale) images. If you wanted to do any analysis on images that are color-dependent, like a bruise, it might not actually work. When you take a discoloration and turn it to grayscale, it’s not going to be significant enough to stand out. You couldn’t use Eigenfaces for something like recognition of a bruise. You’d have to have a very sensitive set and it would probably be impractical.
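To see why a discoloration can disappear in grayscale, here is a small sketch using the standard ITU-R BT.601 luma weights, a common way to convert RGB to gray. The two RGB values are made up for illustration and are not measurements of real skin or bruises. They are chosen only to show that colors that differ clearly can land on almost the same gray level.

```python
def to_gray(rgb):
    """Convert an (R, G, B) pixel to one intensity using BT.601 luma weights."""
    r, g, b = rgb
    return 0.299 * r + 0.587 * g + 0.114 * b

def color_distance(c1, c2):
    """Euclidean distance between two RGB pixels."""
    return sum((a - b) ** 2 for a, b in zip(c1, c2)) ** 0.5

# Hypothetical pixel values, chosen for illustration only.
skin = (200, 150, 130)
discolored = (170, 150, 220)

gray_gap = abs(to_gray(skin) - to_gray(discolored))
print(f"distance in color: {color_distance(skin, discolored):.1f}")
print(f"difference in grayscale: {gray_gap:.1f}")
```

The two pixels are far apart in color but differ by less than two intensity levels out of 255 once converted, so a method that only sees intensities has very little signal to work with.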

Second, you need to have relatively consistent images. With Tinder, people generally take similar profile pictures, i.e., from the same angle. With Tinderbox, I had the algorithm only examine images where there was a single face (as opposed to group pictures), and where the picture was taken from a balanced perspective – with the person’s face pointed straight at the camera. If pictures of the person are not consistent, then the algorithm may not be as successful in correctly identifying an individual.

In terms of overcoming these hurdles and possibilities for future work, one of the most interesting results that came out of the Tinderbox project was how the Eigenfaces looked when you averaged them together. The uncanny black and white faces that you saw on my blog – those are a representation of the average of each face merged together. I had an a-ha moment, where I thought about how this might be used to predict the faces of individuals who may be under stress or experiencing abuse. It made me think, what does a person from a background of abuse look like in comparison to a control – to a person who is not being abused and who is happy in their own life? This could be very powerful.

If indicators of abuse (facial expression, change over time) were contained within the face, then you might be successful in detecting those indicators and identifying something like abuse.
